By now, we’ve all heard about the Task Parallel Library (TPL) introduced in .NET 4.0. The TPL is really neat and goes a long way in making parallel programming palatable for a bigger slice of developers. With .NET 4.5, the TPL team went a step further and built a little-known library called the Parallel Dataflow Library (officially the TPL Dataflow Library, which lives in the System.Threading.Tasks.Dataflow namespace).

Parallel what… you ask?

Yeah well, the name isn’t very helpful, nor has the library been blogged about much! But the Parallel Dataflow Library is actually quite powerful. It builds on the TPL to give us in-process message passing, which helps in creating a ‘pipeline’ of ‘tasks’ when we are building asynchronous applications. Confused? Let’s try an example.

Let’s think of this scenario! You need to process multiple images (resizing them, applying Instagram-like filters or something else) and then save the new images. The images are going to arrive in your system intermittently. Once they arrive, you need to process them and put them in a destination location. How shall we go about it?

We can do this in two ways.

The first way is to wait for images to arrive and, when they do (one at a time or in bulk), pick each available image, load it, process it, move it to the output and go back to waiting. As we can see, this is a monolithic, synchronous action covering three potentially independent steps. Every file goes through all three steps while the next file waits to be considered.

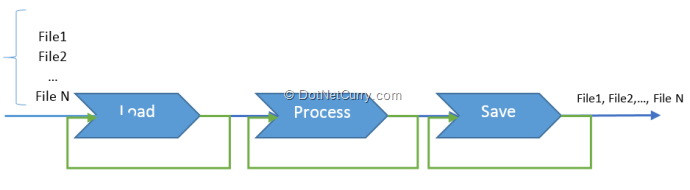

In the second approach, once a file is loaded, the ‘loader’ hands the image over to the ‘processor’ and goes back to loading. Similarly, once the processor is done, it hands the image over to the output step to save and goes back to processing the next one, and ditto for the output step! So these three tasks can be piped together: on the input side we have files as they come in, and on the output side we have files as they get done. The above image would get transformed into the following.

As we can see, it’s a pipeline of smaller tasks that communicate with each other as data flows through over time. The Parallel Dataflow Library helps us build this kind of solution with relative ease. The parallel execution and asynchronous behavior are taken care of by the TPL, on which the Dataflow library is built.

Now that we know what it does, let’s see some of the types and structures that help the Parallel Dataflow Library achieve this.

Parallel Dataflow Library – Programming Model

Continuing with our example above, if we look at the three blocks we have, the first one can be considered the source block, the last one the target block, and the one in the center the transformation block that moves data from source to target while doing some action on it.

The Dataflow library uses similar constructs to describe the interfaces implemented by these blocks. We have three interfaces: ISourceBlock<TOutput>, ITargetBlock<TInput> and IPropagatorBlock<TInput, TOutput>. A propagator block is both a target and a source: IPropagatorBlock<TInput, TOutput> inherits from ITargetBlock<TInput> and ISourceBlock<TOutput>, where TInput is the type of data the block accepts and TOutput is the type of data it produces.

Just as shown in the diagram, each of these blocks can be linked to the next via the LinkTo method. In fact, blocks can be linked to form a pipe (a linear sequence) or a graph (aka network).
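To make the linking concrete, here is a minimal sketch of a three-block pipe that works on plain strings rather than images. The class and variable names (LinkToSketch, trim, upper, collect) are made up for illustration and are not part of the sample application. It assumes the System.Threading.Tasks.Dataflow package is referenced and a compiler that supports async Main (C# 7.1 or later).

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class LinkToSketch
{
    // Builds a three-stage pipe of strings and returns what reaches the last block.
    public static async Task<List<string>> RunAsync(IEnumerable<string> inputs)
    {
        var results = new List<string>();

        // Stages 1 and 2 are propagators: each is both a target and a source.
        var trim = new TransformBlock<string, string>(s => s.Trim());
        var upper = new TransformBlock<string, string>(s => s.ToUpperInvariant());

        // Stage 3 is a pure target. By default an ActionBlock handles one item
        // at a time, so the list needs no lock here.
        var collect = new ActionBlock<string>(s => results.Add(s));

        // PropagateCompletion forwards Complete() (or a fault) down the pipe.
        var linkOptions = new DataflowLinkOptions { PropagateCompletion = true };
        trim.LinkTo(upper, linkOptions);
        upper.LinkTo(collect, linkOptions);

        foreach (string input in inputs)
            trim.Post(input);

        trim.Complete();            // no more input will arrive
        await collect.Completion;   // wait until the tail block has drained
        return results;
    }

    public static async Task Main()
    {
        List<string> output = await RunAsync(new[] { " a.jpg ", "b.jpg" });
        Console.WriteLine(string.Join(", ", output)); // prints "A.JPG, B.JPG"
    }
}
```

Only the head block is handed to the caller for posting; the links take care of moving each result to the next stage.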

The Parallel Dataflow Library provides lots of Pre-Defined Data Blocks. You can find a comprehensive list of these blocks on MSDN.

Today we’ll build an example using the TransformBlock and the ActionBlock.

The ActionBlock<TInput>

The ActionBlock<TInput> class is a target block (it implements ITargetBlock<TInput>) that calls a delegate whenever it receives data. You can think of an ActionBlock<TInput> object as a delegate that runs asynchronously as data becomes available.
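As a rough illustration of that idea, the sketch below posts a few integers to an ActionBlock<int> and waits for it to finish. The names ActionBlockSketch and SumAsync are hypothetical, and the example assumes the System.Threading.Tasks.Dataflow package is available.

```csharp
using System;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class ActionBlockSketch
{
    public static async Task<int> SumAsync(params int[] values)
    {
        int sum = 0;

        // The delegate runs once per posted item. By default items are
        // processed one at a time, so no lock is needed around sum.
        var add = new ActionBlock<int>(n => { sum += n; });

        foreach (int value in values)
            add.Post(value);      // returns immediately; the work happens asynchronously

        add.Complete();           // signal that no more items will arrive
        await add.Completion;     // completes once every queued item has been processed
        return sum;
    }

    public static async Task Main()
    {
        Console.WriteLine(await SumAsync(1, 2, 3)); // prints 6
    }
}
```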

The TransformBlock<TInput, TOutput>

The TransformBlock<TInput, TOutput> class is similar to the ActionBlock<TInput> class, except that it acts as both a source and a target. The delegate that you pass to a TransformBlock<TInput, TOutput> object takes a value of type TInput and returns a value of type TOutput.
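A tiny sketch of a TransformBlock on its own may help here. It maps a string to its length and reads the result back with the ReceiveAsync extension method. TransformBlockSketch and LengthOfAsync are made-up names for illustration, and the System.Threading.Tasks.Dataflow package is assumed.

```csharp
using System;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class TransformBlockSketch
{
    public static async Task<int> LengthOfAsync(string text)
    {
        // TInput is string, TOutput is int: the delegate maps one to the other.
        var measure = new TransformBlock<string, int>(s => s.Length);

        measure.Post(text);                   // the block acts as a target here...
        return await measure.ReceiveAsync();  // ...and as a source here
    }

    public static async Task Main()
    {
        Console.WriteLine(await LengthOfAsync("file.jpg")); // prints 8
    }
}
```

In a real pipeline you would normally link the block to a target instead of calling ReceiveAsync yourself.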

With the basics out of the way, let’s see some code in action.

The Parallel Image Serializer

We’ll continue with our above example and build a sample as follows:

1. A WinForms application that has a folder picker each for the input and output folders

2. Two TextBoxes to show data received vs. data output

3. A FileSystemWatcher to push new data into the pipe

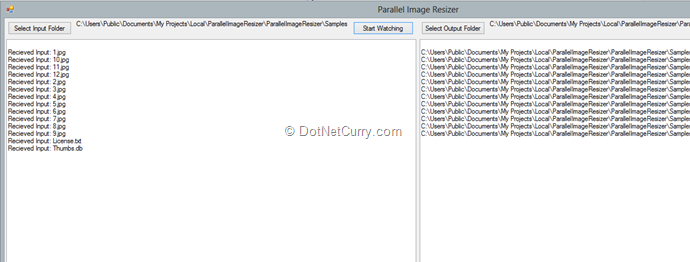

The UI of the application (after the first lot has been processed) is as follows.

Now let’s say we drop a few more files in this folder. We’ll see them appear on the input side first and then get added to the output.

The random names are there for a reason that we’ll see shortly, but the point is that our pipeline is ready and waiting to process input as it comes in. Here we have used a FileSystemWatcher to watch for Created events; when a new file is created, it pushes the new file into our pipe for processing. If we copy multiple files, all of them will be processed.

The Code

I am skipping the code that gets the folder names, as it’s pretty self-explanatory. Let’s look first at how we create the pipe (or network) of Dataflow objects. The CreateImageResizingNetwork() method builds the network for us.

First it creates a TransformBlock to load bitmaps. This uses a delegate that takes the path of the image file as a string and returns a Bitmap object. The delegate internally calls the helper method LoadBitmap(…).

Next it creates another TransformBlock, assigned to the variable resizedBitmap. Its delegate takes a Bitmap as input and returns a new Bitmap to which the transformation has already been applied. It uses the helper method ResizeBitmap to do the resizing.

The third block created is an ActionBlock. It takes the transformed Bitmap as input and saves it to disk. Because it also updates the output TextBox, it is configured to run on the UI thread via TaskScheduler.FromCurrentSynchronizationContext().

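    ITargetBlock<string> CreateImageResizingNetwork()
    {
        // Loads a Bitmap from a file path. LoadBitmap returns null on failure.
        var loadBitmap = new TransformBlock<string, Bitmap>(path =>
        {
            try
            {
                return LoadBitmap(path);
            }
            catch (OperationCanceledException)
            {
                return null;
            }
        });

        // Produces a half-size copy of each incoming Bitmap.
        var resizedBitmap = new TransformBlock<Bitmap, Bitmap>(bitmap =>
        {
            try
            {
                return ResizeBitmap(bitmap);
            }
            catch (OperationCanceledException)
            {
                return null;
            }
        });

        Random rnd = new Random();

        // Saves each resized Bitmap under a random file name and updates the UI.
        var saveResizedBitmap = new ActionBlock<Bitmap>(bitmap =>
        {
            string fileName = Path.Combine(labelOutputFolder.Text, rnd.Next().ToString() + ".jpg");
            // Name the format explicitly; Save(fileName) alone does not pick
            // the encoder from the file extension.
            bitmap.Save(fileName, ImageFormat.Jpeg);
            textBoxOutput.Text += Environment.NewLine + fileName;
            Cursor = DefaultCursor;
        },
        new ExecutionDataflowBlockOptions
        {
            // Run this block on the UI thread so that it can touch the controls.
            TaskScheduler = TaskScheduler.FromCurrentSynchronizationContext()
        });

        //
        // Connect the network. Null results are routed to a null target so that
        // they are discarded instead of blocking the items queued behind them.
        //
        loadBitmap.LinkTo(resizedBitmap, bitmap => bitmap != null);
        loadBitmap.LinkTo(DataflowBlock.NullTarget<Bitmap>());
        resizedBitmap.LinkTo(saveResizedBitmap, bitmap => bitmap != null);
        resizedBitmap.LinkTo(DataflowBlock.NullTarget<Bitmap>());

        return loadBitmap;
    }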

Once we have the blocks initialized, we hook them together using the LinkTo method as seen above.
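A note on filtered links: a link with a predicate only accepts messages that satisfy it, and a message that no link accepts stays in the source block’s output buffer and holds up everything queued behind it. The sketch below, which uses strings as a stand-in for bitmaps, shows one common way to deal with this, namely adding a second, unfiltered link to DataflowBlock.NullTarget<T>() so that rejected messages are discarded. FilteredLinkSketch and its stand-in "loader" are hypothetical and not part of the sample application.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class FilteredLinkSketch
{
    public static async Task<List<string>> RunAsync(IEnumerable<string> paths)
    {
        var saved = new List<string>();

        // Stand-in for LoadBitmap: yields null for anything that is not a .jpg.
        var load = new TransformBlock<string, string>(
            path => path.EndsWith(".jpg", StringComparison.OrdinalIgnoreCase) ? path : null);
        var save = new ActionBlock<string>(path => saved.Add(path));

        // Links are tried in the order they were added: non-null items go to
        // save, and whatever the filter rejects falls through to the null target.
        load.LinkTo(save,
            new DataflowLinkOptions { PropagateCompletion = true },
            path => path != null);
        load.LinkTo(DataflowBlock.NullTarget<string>());

        foreach (string path in paths)
            load.Post(path);

        load.Complete();
        await save.Completion;
        return saved;
    }

    public static async Task Main()
    {
        List<string> result = await RunAsync(new[] { "a.jpg", "b.txt", "c.jpg" });
        Console.WriteLine(string.Join(", ", result)); // prints "a.jpg, c.jpg"
    }
}
```

Without the second LinkTo call, the null produced for "b.txt" would never leave the load block, so "c.jpg" would never reach save and the Completion task would not finish.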

The LoadBitmap method is rather simple: it creates a new Bitmap from the file name and returns null if the file cannot be loaded.

    // Loads the bitmap file that exists at the provided path.
    private Bitmap LoadBitmap(string fileName)
    {
        try
        {
            // Create the Bitmap object from the file.
            return new Bitmap(fileName);
        }
        catch (Exception)
        {
            // TODO: handle the error.
        }

        // Loading failed; the network filters out null results.
        return null;
    }

The ResizeBitmap method takes the incoming Bitmap and puts it in a new Bitmap that’s half the original size. Once resized, the resized image is returned.

    // Creates a bitmap that is 50% of the size of the given Bitmap.
    private Bitmap ResizeBitmap(Bitmap bitmapIn)
    {
        // Create a 32-bit Bitmap object with half the width and height of the input.
        Bitmap result = new Bitmap(bitmapIn.Width / 2, bitmapIn.Height / 2,
            PixelFormat.Format32bppArgb);

        // Draw the input image, scaled down, onto the new bitmap.
        using (Graphics g = Graphics.FromImage(result))
            g.DrawImage(bitmapIn, 0, 0, result.Width, result.Height);

        // Return the result.
        return result;
    }
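Resizing is CPU-bound work, so if it turns out to be the slow stage, one option (not used in the sample application) is to let the block process several items at once through ExecutionDataflowBlockOptions.MaxDegreeOfParallelism. The sketch below uses integers and a short sleep as a stand-in for ResizeBitmap; ParallelTransformSketch and its names are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class ParallelTransformSketch
{
    public static async Task<List<int>> RunAsync(IEnumerable<int> sizes)
    {
        var results = new List<int>();

        // Stand-in for ResizeBitmap: pretend to work for a while, then halve the value.
        var halve = new TransformBlock<int, int>(
            size => { Thread.Sleep(50); return size / 2; },
            new ExecutionDataflowBlockOptions
            {
                // Allow up to one in-flight item per processor.
                MaxDegreeOfParallelism = Environment.ProcessorCount
            });

        // The collector keeps the default of one item at a time, so no lock is needed.
        var collect = new ActionBlock<int>(r => results.Add(r));
        halve.LinkTo(collect, new DataflowLinkOptions { PropagateCompletion = true });

        foreach (int size in sizes)
            halve.Post(size);

        halve.Complete();
        await collect.Completion;
        return results;
    }

    public static async Task Main()
    {
        List<int> output = await RunAsync(new[] { 800, 600, 400 });
        Console.WriteLine(string.Join(", ", output)); // prints "400, 300, 200"
    }
}
```

By default a TransformBlock emits its results in the order the inputs arrived, even when several items are processed in parallel, which is why the output order above matches the input order.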

All this is fine, but how do we initiate the pipeline? We do this on click of the ‘Start Watching’ button.

Here headBlock is a field of type ITargetBlock<string>. If it is null, we call the CreateImageResizingNetwork() method to build our network. As you can see, the null check ensures the network is created only once for every run of the application.

The click handler expects the input and output folders to be set already. It points the fileSystemWatcher1 instance at the input folder and sets it to watch for JPEG files.

Once the image resizing network is initialized, we ‘Post’ each existing file path to the network. That kicks it off: it loads each file, resizes it and saves it. Once the initial lot of files has been posted, the fileSystemWatcher1 instance is enabled to watch for any new incoming files.

    private void buttonStartWatching_Click(object sender, EventArgs e)
    {
        fileSystemWatcher1.Path = labelInputFolder.Text;
        fileSystemWatcher1.Filter = "*.jpg";

        // Build the network only once per run of the application.
        if (headBlock == null)
        {
            headBlock = CreateImageResizingNetwork();
        }

        // Post every existing image in the input folder to the network.
        foreach (string fileName in Directory.GetFiles(fileSystemWatcher1.Path, fileSystemWatcher1.Filter))
        {
            textBoxInput.Text += Environment.NewLine + "Received Input: " +
                Path.GetFileName(fileName);
            headBlock.Post(fileName);
        }

        // Start watching for files that arrive from now on.
        fileSystemWatcher1.EnableRaisingEvents = true;
    }
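A word on Post: it never waits. It returns true if the block accepted the item and false if the block declined it. The blocks in this article are unbounded, so they accept everything until they are completed. If you ever set BoundedCapacity on a block, Post can start returning false, and the SendAsync extension method, which waits for room, is usually the better fit. The sketch below illustrates the difference under those assumptions; PostVersusSendAsyncSketch is a made-up name and the code is not part of the sample application.

```csharp
using System;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow;

// Hypothetical illustration; not part of the article's sample application.
public static class PostVersusSendAsyncSketch
{
    public static async Task<(bool First, bool Second, bool Sent)> RunAsync()
    {
        // The gate keeps the first item "in progress" until we release it.
        var gate = new TaskCompletionSource<bool>();

        var slow = new ActionBlock<string>(
            async path => { await gate.Task; },
            new ExecutionDataflowBlockOptions { BoundedCapacity = 1 });

        bool first = slow.Post("a.jpg");    // accepted: the block has room
        bool second = slow.Post("b.jpg");   // declined: the single slot is still in use

        // SendAsync offers the item and completes once the block takes it.
        Task<bool> pending = slow.SendAsync("b.jpg");

        gate.SetResult(true);               // let the first item finish
        bool sent = await pending;

        slow.Complete();
        await slow.Completion;
        return (first, second, sent);
    }

    public static async Task Main()
    {
        var outcome = await RunAsync();
        Console.WriteLine($"{outcome.First}, {outcome.Second}, {outcome.Sent}");
    }
}
```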

When the watcher detects that a new file has been created, the Created event fires. The handler again updates the Input textbox with the name of the new file and then checks whether our pipeline has been initialized. If so, it simply posts the new file’s path to the pipeline, which again executes the three steps required to manipulate the file.

    private void fileSystemWatcher1_Created(object sender, FileSystemEventArgs e)
    {
        textBoxInput.Text += Environment.NewLine + "Received Input: " +
            Path.GetFileName(e.FullPath);

        // Only post if the network has already been created.
        if (headBlock != null)
        {
            headBlock.Post(e.FullPath);
        }
    }

The FileSystemWatcher is just a convenience here; the source could equally be a socket streaming data, a file stream of incoming data and so on. The idea is that once you have the ‘actions’ pipelined, you can get data ‘streamed’ in any way, and the pipeline will work on it asynchronously as the data comes in.

Conclusion

That was a very quick and brief introduction to the Parallel Dataflow Library of the TPL! It is quite extensive with respect to the blocks it supports, and it’s a matter of cooking up the use case we want to throw at it. I hope to have at least set out the premises under which such an architecture should be considered.

Overall, the Dataflow library helps us do ‘in-process’ message passing that can be used for dataflow and pipelining at a much higher level, that is, with objects in managed code, as opposed to with bits and bytes inside CPU execution pipelines!

Download the entire source code of this article (Github)

This article has been editorially reviewed by Suprotim Agarwal.

Sumit is a .NET consultant and has been working on Microsoft Technologies since his college days. He edits, he codes and he manages content when at work. C# is his first love, but he is often seen flirting with Java and Objective C. You can follow him on Twitter at @sumitkm or email him at sumitkm [at] gmail