The concept of DevOps has been explained by many people in different ways. My interpretation of DevOps is ‘Bringing Agility to Operations, in line with Development’. I am going to start with two concepts in that definition that need some explanation and then move on to provide some examples of how Azure supports DevOps.

The first concept in my statement about DevOps is agility in development. Although it is not a new concept, it still needs explaining to many developers. Agility, in general, is how quickly an entity reacts in a positive way to a change in its environment. An apt example of a high level of agility is a fighter aircraft engaged in a dogfight. The aircraft and its pilot together have to take constant evasive and attacking actions in response to similar actions taken by the enemy aircraft. The example assumes that the aircraft is built for the evasive maneuvers a dogfight requires. A passenger or bomber aircraft in place of the fighter would not be able to perform them. Extending this example to software development, a team that can act quickly on changes detected in its environment, such as a change in the business situation or in the rules of the business, is an Agile team. Just as a passenger aircraft cannot be agile, not every team is Agile. Teams have to change or be rebuilt as Agile teams. A slow, lumbering team cannot become Agile simply by following some Agile practices.

The second concept in that statement is Operations (the team) and how it interacts with the development team. The operations team is responsible for:

1. Creating the environment in which the developed application will run, and provisioning it so that the application can be deployed as and when required.

2. Ensuring the quality of the services provided to the customer, such as availability, performance, scalability and security.

3. Giving the development team the necessary feedback when maintaining the quality of service requires some action from that team.

4. Managing incidents related to the deployed software.

Development and Operations teams are the two wheels of a chariot. If one wheel is slow, the chariot as a whole cannot be agile. If the Development team delivers rapid increments to a product but the Operations team takes a long time to deploy them, the benefits of the agility shown by the Development team never reach the customer. In this article, I will show with examples how Azure can help the Operations team become Agile as well.

This article is published from the DNC Magazine for .NET Developers and Architects.

Case Study

Let us first take a simple example. Your team is given the task of creating a Proof-of-Concept (POC) of a web application so that the customer can view the POC and sanction the budget for the full-fledged application. You have decided to develop the POC in Visual Studio, to use Visual Studio Online (VSO) for source control and builds, and to host it as a web application on Azure.

Let us start by creating that web application, named “SSGSEMSWebApp”. The application will be in the azurewebsites.net domain, which is fine for us since it is only a POC. Once the application is provisioned, we will configure it to be deployed from source control by integrating it with VSO source control.

One assumption here is that we have already created a team project on VSO to integrate with this Azure web application. Right now there is nothing under source control in that team project, but we can still link it to our web application. The team project is named “SSGS EMS Demo”.

While adding this source control link, we have to authorize it by logging on to our VSO subscription. Finally, we select the team project name from the dropdown as the repository to deploy.

Now we can open Visual Studio 2013 and create the actual ASP.NET web application. While creating it, we can choose to put it under the source control of the SSGS EMS Demo team project and to host it in the cloud as a website. For the website, we give the name of the application that we created on Azure.

Once the application is ready in Visual Studio, we can check it in. You will observe that a build starts automatically as part of Continuous Integration. Its build definition was created automatically when we associated our Azure web application with the source control of this project.

Even more interesting, the deployment process starts as soon as the build completes successfully. In other words, we get CICD (Continuous Integration, Continuous Deployment) as a bonus when we use Azure as the hosting provider and VSO as the source control of our application.

The deployments page of the web application keeps listing the deployments that happen as part of CICD. We can even swap deployments: if a new version does not work as expected, we can redeploy the old version with a single click of the mouse.
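The swap described above can be pictured with a small model. This is only a sketch of the idea, not an Azure API: the `DeploymentHistory` class and the build names are hypothetical, and it assumes that every successful build is kept in a list so that an earlier one can be made active again without rebuilding.

```python
from dataclasses import dataclass, field


@dataclass
class DeploymentHistory:
    """Hypothetical model of a site's deployment list (not an Azure API)."""
    deployments: list = field(default_factory=list)  # oldest first
    active: int = -1  # index of the deployment currently being served

    def deploy(self, build_id: str) -> None:
        # A successful CI build is appended and becomes the active deployment.
        self.deployments.append(build_id)
        self.active = len(self.deployments) - 1

    def redeploy(self, build_id: str) -> None:
        # Make an earlier deployment active again without rebuilding it.
        self.active = self.deployments.index(build_id)

    def current(self) -> str:
        return self.deployments[self.active]


history = DeploymentHistory()
history.deploy("build-1")
history.deploy("build-2")    # the new version turns out to be faulty
history.redeploy("build-1")  # go back to the previous deployment
print(history.current())
```

Note that the faulty build stays in the history, so it can be inspected or redeployed later once it is fixed.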

The operations team not only deploys the application but also monitors it for quality of service. Azure makes this simpler with a service named Application Insights. It collects telemetry data such as availability, performance and errors, and lets us view reports on it for applications hosted anywhere: in Azure, on an on-premises web server, or even running in Visual Studio 2013. For this data to be collected, the ASP.NET web application needs to have the Application Insights SDK installed. It is a NuGet package that can be installed while creating the project, or afterwards from the Solution Explorer of Visual Studio.

Once the application starts running, telemetry data is collected and we can view it in the App Insights page of the new Azure portal.

It shows data on availability, failures, overall performance, server performance, client-side performance, usage patterns, users, sessions, page views and more. We can drill down into each section to view the details.
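To make two of these figures concrete, here is a minimal sketch of how availability and response time could be summarized from raw request records. It does not use the Application Insights SDK; the `summarize` function and the sample values are invented purely for illustration.

```python
from statistics import mean

# Invented sample data: (request succeeded, response time in milliseconds).
samples = [(True, 120), (True, 95), (False, 3000), (True, 110)]


def summarize(samples):
    """Return (availability in percent, mean response time of successful requests)."""
    ok_times = [ms for ok, ms in samples if ok]
    availability = 100.0 * len(ok_times) / len(samples)
    mean_ms = mean(ok_times) if ok_times else None
    return availability, mean_ms


availability, mean_ms = summarize(samples)
print(f"availability: {availability:.1f}%")
print(f"mean response time: {mean_ms:.1f} ms")
```

Failed requests are left out of the response-time average here so that a timeout does not distort it; a real monitoring service may make a different choice.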

Now that the POC has been shown to the stakeholders and the telemetry data has justified building the full application, some constraints come into play. Let us list a typical set of constraints that the operations team may encounter:

- The application will be hosted on actual VMs in Azure and not on the Web Application service of Azure.

- The application will move through stages: it will be tested in the test environment, UATs will be carried out in the preproduction environment, and finally it will be deployed to the production environment.

- It will now be source controlled on an on-premises TFS and built there as well.

- The environments required for hosting are in Azure. Each environment may contain multiple VMs.

- Promotion of a build from one environment to the next requires approval from a competent authority.

In this case, Microsoft Release Management 2013 is an appropriate tool. It works with TFS or VSO, and it can deploy software to on-premises machines or to VMs in Azure. It can create and use multiple environments made up of multiple machines, and those environments can be used in the stages that form the release path. Microsoft Release Management 2013 has all the necessary tools for deployment. It can do a simple XCOPY deployment and can create a web application with its own app pool. It can use a DACPAC to deploy a SQL Server database and can also call PowerShell Desired State Configuration (DSC) scripts to carry out tasks on a target machine. Let us now see how to configure it.

First, we have to create a picklist of the stage names that we will be using, for example Testing, Pre-Prod and Production. Next, we create environments with similar names. For that, we have to provide the details of our Azure subscription, since the environments are made up of virtual machines in Azure. One thing to remember is that all VMs that are part of the same environment have to be in the same Cloud Service. In fact, when we select Azure as the target for environments, the wizard shows the names of the Cloud Services in our subscription as candidates for creating environments.
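The rule that every VM of an environment must sit in the same Cloud Service is easy to check before the environments are defined. The sketch below is a plain Python illustration, not part of Release Management; the function name, VM names and cloud service names are all hypothetical.

```python
def validate_environment(vms):
    """Return the single cloud service shared by all VMs, or raise ValueError.

    `vms` maps a VM name to the name of the cloud service that hosts it.
    """
    services = set(vms.values())
    if len(services) != 1:
        raise ValueError(
            f"VMs span {len(services)} cloud services: {sorted(services)}"
        )
    return services.pop()


# Hypothetical VM and cloud service names.
testing = {"test-web": "ems-testing", "test-db": "ems-testing"}
mixed = {"prod-web": "ems-prod", "prod-db": "ems-testing"}

print(validate_environment(testing))
try:
    validate_environment(mixed)
except ValueError as err:
    print("rejected:", err)
```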

Now we can create the release path that depicts the stages in the serial order in which the deployment has to take place.

You may have observed that each stage has a provision to add an approval step and name an approver. This ensures that deployment to the next stage happens automatically, but only after the approver has given approval.
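The gating behaviour can be sketched as a loop over the release path. This is a simplified model, not how Release Management is implemented: it assumes one approver per stage whose sign-off is needed before the build moves on, and the `Stage` class, the `promote` function and the approver names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Stage:
    name: str
    approver: str  # person who must approve before the build moves on


def promote(path: List[Stage], approvals: Set[str]) -> List[str]:
    """Deploy stage by stage and return the names of the stages reached.

    The build is deployed to a stage, then waits there until that
    stage's approver appears in `approvals`.
    """
    reached = []
    for stage in path:
        reached.append(stage.name)
        if stage.approver not in approvals:
            break  # hold here until this stage is approved
    return reached


release_path = [
    Stage("Testing", "alice"),
    Stage("Pre-Prod", "bob"),
    Stage("Production", "carol"),
]

# Only the Testing stage has been approved so far.
print(promote(release_path, {"alice"}))
```

With only the first approval given, the build reaches Pre-Prod and stops there; Production is deployed only once the Pre-Prod approver signs off.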

The final step is to create a release template in which, for each stage, we configure which deployment actions have to take place and which tools are to be used for them. For Azure VMs, we are going to use a PowerShell DSC script. We have to keep that script as part of the project and then provide the necessary details to the deployment step.

Once the template is ready, we can either trigger the release manually or configure CICD through a build that uses the custom build template installed on TFS along with Release Management.

Deployment in a Mixed Environment (Linux and Windows VMs)

The next case we are going to study is a deployment into a mixed environment that contains some Linux VMs in addition to a Windows VM. Azure offers many Linux templates from which VMs can be created. As part of this deployment scenario, we want to do the following:

1. Create an image of basic Linux VM

2. Provision it with some basic software such as Apache, MySQL and PHP. After provisioning, we would like to store that VM for use as and when required.

3. Once our application is ready, we would like to recreate the instance of that Linux VM

4. Finally, deploy the appropriate component of the application on it.

We can achieve this with the help of some open source tools that are supported on Azure. The first task, creating a basic Linux VM and then converting it into an image, can be done using the Azure Command Line Interface (CLI). Azure CLI is a set of open source commands that can do almost all the tasks that you can execute from the Azure Portal. We can use it to create a VM from a standard Linux template and then store it as an image in the Azure Store.

Provisioning of VMs in Azure can be done using other open source tools. Two of the most popular are Chef and Puppet, which have similar capabilities. The concept behind both tools is Desired State. Each object on a VM is treated as a resource that can have a desired state: the machine, OS, users, groups, software, services and so on are all resources. The tools migrate a resource from its basic state to the desired state using scripts and their built-in abilities.
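The Desired State idea can be illustrated without either tool. The sketch below is plain Python, not Chef or Puppet code: it assumes each resource is just a name mapped to a state string, and the function names and resource names are hypothetical. It compares the current state with the desired one, changes only what differs, and leaves an already converged machine untouched.

```python
def plan_changes(current, desired):
    """Return only the resources whose state differs from the desired state."""
    return {
        name: state
        for name, state in desired.items()
        if current.get(name) != state
    }


def converge(current, desired):
    """Apply the planned changes and return the resulting state."""
    new_state = dict(current)
    new_state.update(plan_changes(current, desired))
    return new_state


# Hypothetical resources on a freshly created Linux VM.
current = {"apache2": "absent", "mysql": "installed"}
desired = {"apache2": "installed", "mysql": "installed", "php": "installed"}

print(plan_changes(current, desired))  # only apache2 and php need work
converged = converge(current, desired)
print(plan_changes(converged, desired))  # nothing left to change
```

Running the same description a second time produces an empty plan, which is why such scripts can be applied repeatedly to VMs that are already provisioned.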

Chef expects the desired state of the VMs to be described in scripts written in Ruby. These scripts are stored at a centralized location, which by default is the Chef service that is available online. Optionally, you may download and install Chef server on premises. The service has a management console that allows us to create an organization and download a starter kit containing Ruby scripts to start with.

We can extend these scripts to describe the desired state of the VMs. In the screenshot, you can see the knife tool using the .publishsettings file of my subscription to connect to it and access the VMs under it.

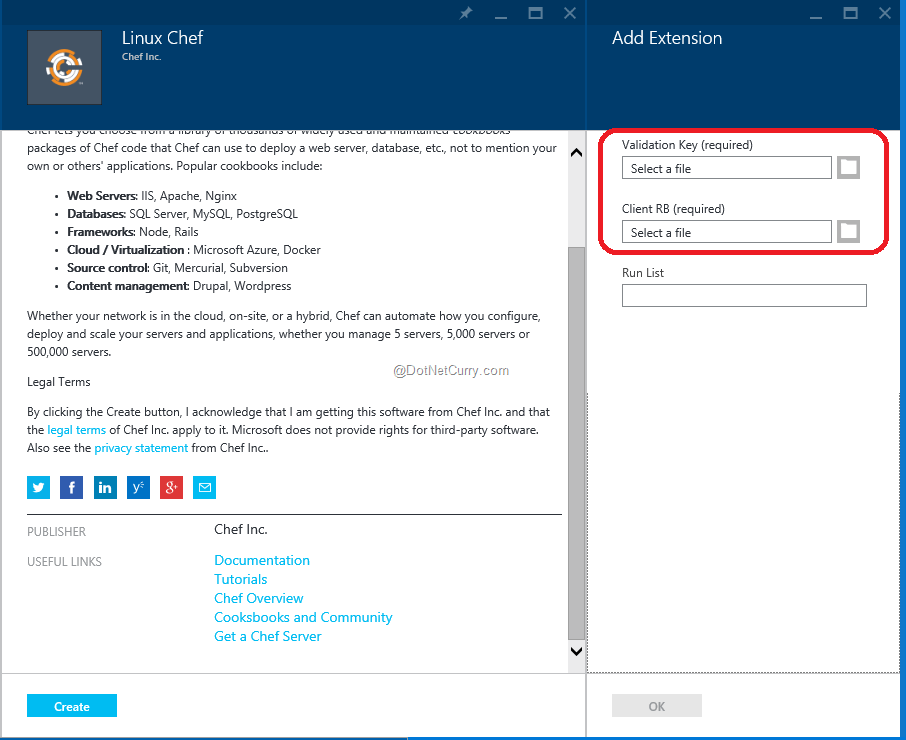

On the virtual machine, we need the Chef Extension installed. Azure helps us install that on a VM that is being created.

It then asks for the client.rb file that was created earlier.

Once installed, the extension queries the management service for the desired state configuration and applies it to the VM.

Puppet works on a similar concept, but it has a server component that has to be installed on one of our machines. It is called the Puppet Master. Azure provides a VM template for the Puppet Master.

Azure also provides the Puppet Agent to be installed on the VMs to be provisioned. We may also install the add-in provided by Puppet Labs to start creating the configuration scripts in Visual Studio.

Our script can be published to the Puppet Forge (a shared resource repository) using the same add-in in Visual Studio, and can then be used from an SSH command prompt on the target machine.

Both Chef and Puppet can provision existing, running VMs, but they cannot create a VM from an existing image. That can be done by another open source tool named Vagrant. It uses an image converted from an existing VM and then applies the configuration provided in a text file named Vagrantfile. Microsoft Openness has recently created a provider for Azure so that Vagrant can create VMs on Azure.

Conclusion

In this article we have seen various ways in which Azure supports the tasks of the operations team as part of DevOps. By automating Continuous Integration and Continuous Deployment (CICD), by creating VMs and provisioning them, and by automating the deployment of the various components of your software on different machines, an operations team that uses Azure services can increase its agility and bring it in line with that of the development team.

This article has been editorially reviewed by Suprotim Agarwal.


Subodh is a trainer and consultant on Azure DevOps and Scrum. He has over 33 years of experience in team management, training, consulting, sales, production, software development and deployment. He is an engineer from Pune University and did his post-graduation at IIT, Madras. He is a Microsoft Most Valuable Professional (MVP) - Developer Technologies (Azure DevOps), Microsoft Certified Trainer (MCT), Microsoft Certified Azure DevOps Engineer Expert, Professional Scrum Developer and Professional Scrum Master (II). He has conducted more than 300 corporate trainings on Microsoft technologies in India, USA, Malaysia, Australia, New Zealand, Singapore, UAE, Philippines and Sri Lanka. He has also completed over 50 consulting assignments, some of which covered an entire Azure DevOps implementation for the organization.

He has authored more than 85 tutorials on Azure DevOps, Scrum, TFS and VS ALM, which are published on www.dotnetcurry.com. Subodh is a regular speaker at Microsoft events including Partner Leadership Conclave. You can connect with him on LinkedIn.